- If AMD’s latest high-end chip, the MI300X, is good enough and inexpensive enough for the technology companies and cloud providers that build and serve AI models when it starts shipping early next year, it could drive down AI model development costs.

- AMD CEO Lisa Su expects the AI chip market to reach $400 billion or more in 2027, and hopes AMD will capture a significant portion of that market.

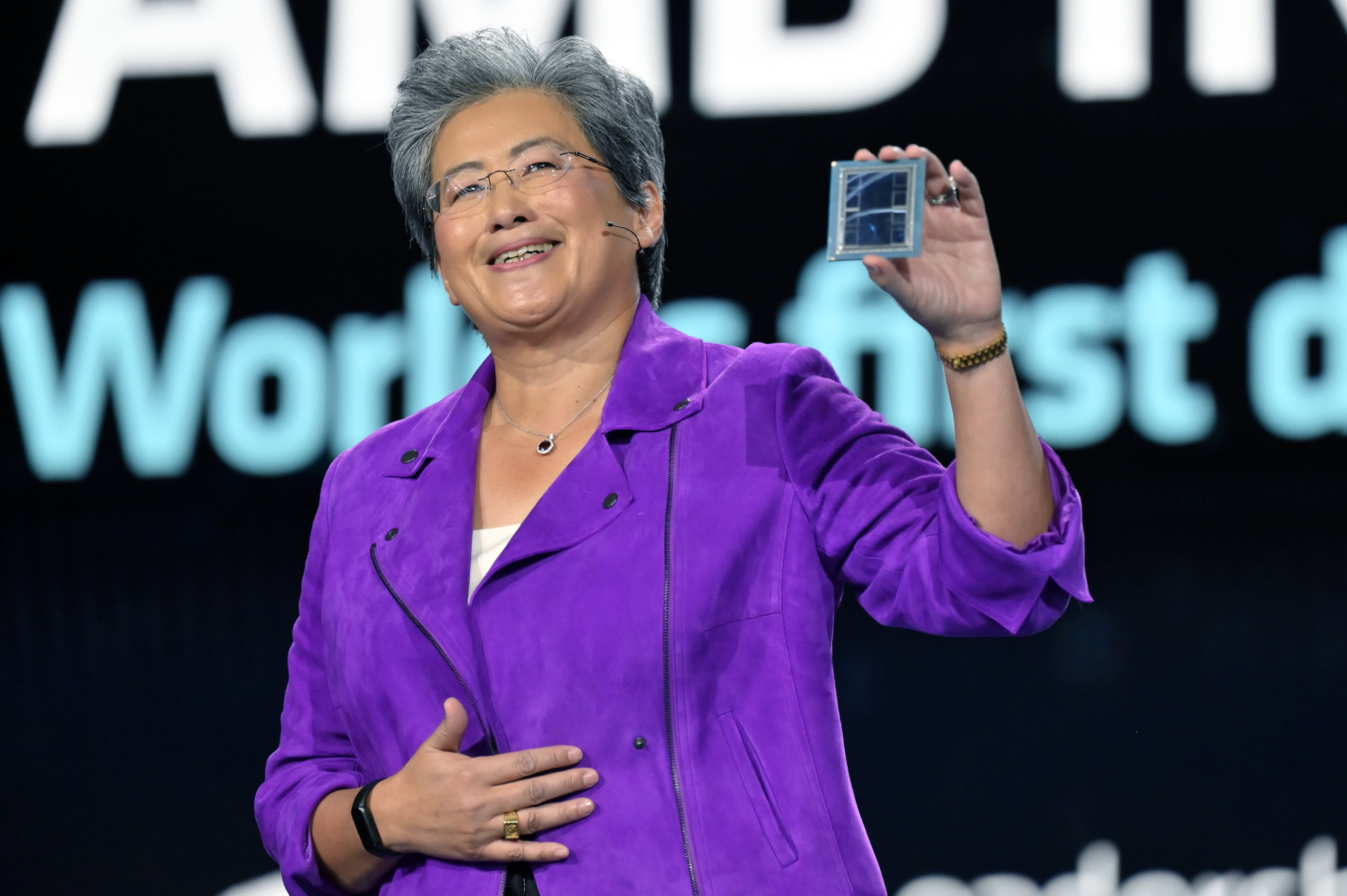

Lisa Su shows the AMD Instinct MI300 chip during a keynote speech at CES 2023 in Las Vegas, Nevada, January 4, 2023

David Becker | Getty Images

Meta, OpenAI and Microsoft said at an AMD investor event on Wednesday that they will use AMD’s latest AI chip, the Instinct MI300X. It’s the biggest sign yet that tech companies are looking for alternatives to expensive Nvidia graphics processors that have been needed to create and deploy AI software like OpenAI’s ChatGPT.

If AMD’s latest high-end chip is good enough for the tech companies and cloud providers that build and serve AI models when it starts shipping early next year, it could lower AI model development costs and put competitive pressure on Nvidia’s surging AI chip sales growth.

“All the attention is on big hardware and big GPUs for the cloud,” AMD CEO Lisa Su said on Wednesday.

AMD says the MI300X is based on a new architecture, which often results in significant performance gains. Its most distinctive feature is 192GB of an advanced, high-performance type of memory known as HBM3, which transfers data faster and can fit larger AI models.

At an analyst event on Wednesday, CEO Lisa Su directly compared the Instinct MI300X and systems built with it to Nvidia’s flagship AI GPU, the H100.

“What this performance does is it directly translates into a better user experience,” Su said. “When you ask a model something, you want them to respond faster, especially when the answers become more complex.”

The main question facing AMD is whether companies that have been relying on Nvidia will invest the time and money to add another GPU supplier. “AMD adoption takes work,” Su said.

AMD on Wednesday told investors and partners that it has improved its software suite called ROCm to compete with Nvidia’s industry-standard CUDA software, addressing a key drawback that has been one of the main reasons AI developers currently prefer Nvidia.

Price will also matter. AMD didn’t reveal pricing for the MI300X on Wednesday, but Nvidia’s chip can cost about $40,000 apiece, and Su told reporters that AMD’s chip would have to cost less to buy and operate than Nvidia’s in order to persuade customers to buy it.

AMD MI300X AI Accelerator.

AMD said Wednesday that it has already signed up some of the companies most hungry for GPUs to use the chip. Meta and Microsoft were the biggest buyers of Nvidia H100 GPUs in 2023, according to a recent report from research firm Omdia.

Meta said it will use Instinct MI300X GPUs for AI inference workloads such as processing AI labels, editing images, and running its assistant. Microsoft CTO Kevin Scott said the company will provide access to MI300X chips through its Azure web service. Oracle’s cloud will also use the chips.

OpenAI said it will support AMD GPUs in one of its software products, called Triton, which isn’t a large language model like GPT but is used in AI research to access chip features.

AMD isn’t forecasting huge sales for the chip yet, and expects only about $2 billion in total data center GPU revenue in 2024. Nvidia reported more than $14 billion in data center sales in the last quarter alone, though that figure includes products other than graphics processing units.

However, AMD says the total market for AI GPUs could rise to $400 billion over the next four years, double the company’s previous forecast. That illustrates how high expectations are and how coveted advanced AI chips have become, and why the company is now focusing investors’ attention on the product line. Su also suggested to reporters that AMD does not believe it needs to beat Nvidia to do well in the market.

“I think it’s clear to say that Nvidia should be the vast majority of that now,” Su told reporters, referring to the AI chip market. “We think it could reach $400 billion in 2027. We can get a big chunk of that.”
